INTERVIEW: Engineering Professor and Caprica Science Consultant Malcolm MacIver

Mar 1

ScriptPhD.com is extraordinarily proud to present our first ever Science Week! Collaborating with the talented writers over at CC2K: The Nexus of Pop Culture and Fandom, we have worked hard to bring you a week’s worth of interviews, reviews, discussion, sci-fi and even science policy. We kick things off in style with a conversation with Professor Malcolm MacIver, a robotics engineer and science consultant on the SyFy Channel hit Caprica. While we have had a number of posts covering Caprica, including a recent interview with executive producer Jane Espenson, to date, no site has interviewed the man who gives her writing team the information they need to bring artificial Cylon intelligence to life. For our exclusive interview, and Dr. MacIver’s thoughts on Cylons, smart robotics, and the challenges of future engineering, read on.

Questions for Professor Malcolm MacIver

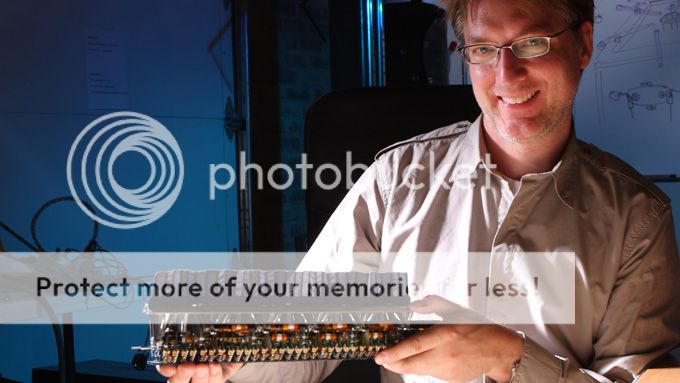

Professor Malcolm MacIver. Image courtesy of Northwestern University.

ScriptPhD.com: Your first Hollywood science experience involved consulting for a sequel of the 1980s cult classic Tron. What was it like to dive in from the Northwestern School of Engineering onto a set and work with screenwriters? What were some of your first impressions?

Malcolm MacIver: It was fascinating to learn a bit about how these huge expensive projects are structured. One specific thing I wanted to know more about was the role of writers in movies versus in TV. I had been told by friends in the industry that writers are typically less prominent players in movies than in top TV shows, where they can have considerable power. Consistent with this, we (the scientists who met with the Tron folks) were not introduced to the writers, who I believe were in the room taking notes, while we were introduced to all the other major players (director, producer, etc.). I was also very curious to see how the group of scientists that I was a part of would interact with the movie makers. The culture gap is obviously huge, big enough for massive misunderstandings to blossom during superficially neutral discussions. During our meeting, our approach to the folks involved with the movie varied from inspired to less admirable attitudes. The less admirable attitudes seemed to arise from the mismatch between the importance scientists can place on their own endeavors, relative to their endeavor’s importance to storytelling.

An anecdote I like along those lines is about how the astrophysicist Neil deGrasse Tyson complained to James Cameron that when Kate Winslet looked up from the deck of the Titanic, the stars in the sky were in the wrong position. I liked Cameron’s response, which was “Last I checked the film’s made a billion dollars.” People love the story, not the positions of the stars above the Titanic. We all tend to overemphasize the importance of the thing we are closest to, and it’s a problem that scientists need to be especially attuned to in these contexts.

SPhD: You are now a technical script consultant for Caprica, the television prequel to Battlestar Galactica, providing insight into things like artificial intelligence, robotics and neuroscience. To date, what has been one of your biggest contributions to a final written episode that otherwise wouldn’t have made it into the storyline?

MM: One of the themes of my research is understanding the ways in which intelligence is not just all about what’s above your shoulders. Nervous systems evolved with the bodies they control—the interaction is extremely sophisticated, and stubbornly resists our attempts to understand it through basic science research or emulation in robotics. Representative of this fact is that we now have a computer that can beat the world chess champion—a paragon of an “above the shoulder” activity—while we are far from being able to robotically emulate the agility of a cockroach.

One of the things we’ve learned about the cleverness that resides outside the cranium is that things like the spinal cord are incredibly sophisticated “brains” operating sometimes without much input from upstairs. Through some old experiments that are better not gone into, scientists showed that animals can walk with little brain beyond the parts that regulate circulation and breathing and their spinal cord. This is because the spinal cord can do most of what we need for basic locomotion without any input. The point is that control of the body is distributed—it doesn’t just live in the brain. The lesson hasn’t been lost on robotics folks; for example, Rodney Brooks popularized an approach called “subsumption architecture” based on this idea. So – back to Caprica: For episode 2, “Rebirth,” the show needed some explanation for why the metacognitive processor was only working in one robot. The real reason, as we know, is that only one had Zoe in it; but the roboticists were being pressed by Daniel Graystone as to why it wasn’t working in others. The idea that I gave them, which they used, was that it was because this particular metacognitive processor had distributed its control to peripheral subunits. Because of this, it had become tied to one particular robot. It’s an idea straight out of contemporary neuroscience and efforts to emulate this in robotics.
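For readers curious how layered, distributed control can look in software, here is a minimal sketch in the spirit of subsumption architecture. It is a simplified priority arbiter, not Brooks's actual wiring of suppression and inhibition links, and every name in it (`avoid_obstacle`, `seek_light`, `wander`, `arbitrate`, the sensor keys and command strings) is hypothetical and chosen only for illustration. Each behavior is a small self-contained controller that reads the sensors directly, and there is no central planner: the basic walking behavior keeps running unless a more urgent behavior takes over.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical sensor snapshot: plain key/value readings.
Sensors = Dict[str, object]
# A behavior returns a command string, or None when it has nothing to say.
Behavior = Callable[[Sensors], Optional[str]]


def avoid_obstacle(sensors: Sensors) -> Optional[str]:
    """Reflex-like behavior: fires only when something is very close."""
    distance = sensors.get("obstacle_distance", float("inf"))
    if distance < 0.5:  # assumed threshold, in meters
        return "turn_left"
    return None


def seek_light(sensors: Sensors) -> Optional[str]:
    """Goal-directed behavior: fires only when a light is in view."""
    if sensors.get("light_visible", False):
        return "steer_to_light"
    return None


def wander(sensors: Sensors) -> Optional[str]:
    """Default locomotion: always has an answer, needs no higher input."""
    return "walk_forward"


# Ordered from most to least urgent; earlier behaviors override later ones.
LAYERS: List[Behavior] = [avoid_obstacle, seek_light, wander]


def arbitrate(sensors: Sensors, layers: List[Behavior] = LAYERS) -> str:
    """Return the command of the first behavior that fires."""
    for behavior in layers:
        command = behavior(sensors)
        if command is not None:
            return command
    return "stop"  # only reached if no behavior fires at all
```

Calling `arbitrate({})` falls through to the default walking behavior, while `arbitrate({"obstacle_distance": 0.2, "light_visible": True})` lets the obstacle reflex override light seeking. The design point is that removing the upper behaviors from `LAYERS` still leaves a robot that walks.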

A prototype for the first Cylon, as seen on the television program Caprica. ©NBC Universal, all rights reserved.

SPhD: To me, one of the most fascinating directions of the show is the idea that the first Cylon prototype was born of blood, in this case Zoe Graystone, and because of that, carries sentient emotions and thoughts. What is the fine line between a very smart, capable robot and an actual being?

MM: To vastly oversimplify things, you can imagine a gradation in “being” from a rock to a fully sentient self-aware entity. Some of the differentiators between the rock and you include things like the impact of others on how you think about yourself. For example, categorizing a rock as a particular kind of rock has no effect on the constitution of the rock. This isn’t so for self-aware creatures: once a person is labeled a child abuser, it actually affects the constitution of the person so labeled. People treat child abusers differently from non-child abusers. People who are categorized in this way suddenly see themselves differently; and those who were victims do so as well. The philosopher Ian Hacking, who I studied with during my Masters in Philosophy at the University of Toronto, called this the difference between “Human Kinds” and “Natural Kinds.” Another differentiator is that, for what you refer to as an “actual being,” there is a sense of self-interest in continued survival. Because of this, such a being is susceptible to being harmed, and may also therefore have what an ethicist would call “moral worthiness.” Moral worthiness in turn imposes certain obligations in regard to ethical treatment. For example, returning to the rock, we wouldn’t say we harm a rock when we explode it with dynamite, and we wouldn’t accuse the person who did the blowing up of unethical behavior (certain stripes of environmentalism would differ on this point). Unlike a rock, all animals exhibit an interest in self-preservation.

A very smart and capable robot can be imagined which is not affected by how it is categorized by others, and does not have an interest in self-preservation. So, it would fall short of at least those criteria for full-on “being.” But, there’s a lot more that can be said here, of course.

SPhD: What aspect of the Cylon machine and their story, which is now at the heart of Caprica, do you find the most captivating, either as a viewer or a robotics engineer?

MM: The scenario of our inventions eventually becoming so complex that they begin to have an interest in self preservation, and thus can be harmed (and so may start to be candidates for ethical treatment), is one I’ve thought a lot about in the past. It’s a key theme of the show, too. That’s one aspect that fascinates me about the show. The other is the play between the different kinds of being that Zoe has—from avatar-in-a-robot, to avatar-in-virtual reality, to “really real.” It’s a fun fugue on the varieties of being that raises good questions about the nature of existence and mortality, among others.

SPhD: In your latest post for the Science in Society blog, you explore the theoretical question of whether the United States (or any country, for that matter) could develop a Cylon type of war machine. Do you feel there is a distinct possibility the military might ever pursue this option and how might it impact warfare strategy?

MM: I’m going to explore that in my next few posts, and I’m still formulating my thoughts. In their initial development, a more realistic metaphor for how such robot warriors will work with us is something like a dumbed-down well-trained animal, willing to follow commands but without much of a sense of what to do if something gets in the way, and little recourse to things like flexibly generating new behaviors like a real animal does. But I can’t foresee any significant barriers to the development of autonomous robots with more of the attributes of a fuller kind of being I mentioned above. I feel the more relevant question is whether this is on the order of 10 years away, or a hundred. Once it happens, the question will then be whether the global community recognizes military applications of this technology as a potential threat in need of careful control, like nuclear arms, or not. That’s all for now – for more you’ll have to visit my blog!

An SR4 smart robot from https://www.SmartRobots.com, currently in use by engineers, designers, developers, project managers, entrepreneurs, students, and businesses. It comes equipped with Linux, wireless web connectivity, and expandability, and costs about $6,400.

SPhD: During last year’s World Science Festival, we covered a really interesting panel called Battlestar Galactica: Cyborgs on the Horizon, which included a cross-section of engineers, ethicists and Battlestar actors discussing artificial intelligence, robotics, and the capabilities of modern engineering, which in some cases are very impressive indeed. In your opinion, what is one of the most significant or promising advances in robotics of the last few years?

MM: The maturing of what is sometimes called “probabilistic robotics.” This approach is what allowed the autonomous car Stanley to win the DARPA Grand Challenge, the challenge to have a vehicle drive itself with no human involvement over a challenging course in the desert. The basic idea is that while traditional robotics was concerned with making precise motions based on very well characterized inputs, what we need for robots to work in the real world is ways to handle the massive array of noisy and uncertain signals that are typically available to guide behavior. There are approaches from probability and statistics for doing this well. These approaches are integral to making robots have greater sensory intelligence. My own laboratory has developed a new kind of sensory robot using this approach, and it works very well.
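To make the idea of handling noisy and uncertain signals concrete, here is a minimal sketch of one standard tool from this family, a one-dimensional Kalman filter. It is offered only as an illustration of the general approach and is not a description of Stanley's software or of Dr. MacIver's laboratory robot. The function name `kalman_1d` and its parameters are hypothetical, and the model assumes a single scalar quantity with Gaussian noise.

```python
from typing import List, Tuple


def kalman_1d(
    measurements: List[float],
    meas_var: float,
    process_var: float,
    init_mean: float = 0.0,
    init_var: float = 1e6,
) -> Tuple[List[float], float]:
    """Fuse noisy scalar readings into a running estimate.

    meas_var    -- assumed variance of the sensor noise
    process_var -- assumed variance added per step as the true value drifts
    init_var    -- large by default, meaning "we know almost nothing yet"
    Returns the estimate after each reading and the final variance.
    """
    mean, var = init_mean, init_var
    estimates = []
    for z in measurements:
        # Predict: the value is modeled as roughly constant, so only the
        # uncertainty grows between readings.
        var += process_var
        # Update: weight the new reading by how much we trust it
        # relative to the current estimate.
        gain = var / (var + meas_var)
        mean = mean + gain * (z - mean)
        var = (1.0 - gain) * var
        estimates.append(mean)
    return estimates, var
```

Rather than trusting any single reading, the filter carries an estimate together with its uncertainty, and each new measurement shifts the estimate in proportion to how reliable it is assumed to be. With `process_var` set to zero the result converges toward the average of the readings, and the returned variance shrinks as evidence accumulates.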

SPhD: I have previously argued that television and film do more for promoting science by incorporating small, accurate pieces into an overarching story rather than basing an entire story on an unsustainable or far-reaching scientific concept. Thoughts?

MM: TV and film, when it is successful, is about telling a captivating story. The elements of good storytelling (emotional connection to the characters, humor, insight into what it is to be human) have little in common with the elements of a good scientific concept (testability, explanatory power, coherence with the rest of what we know). So, yes I’d agree. Trying to do more than incorporate small bits is going to lead to your audience feeling like they are getting a lecture rather than having a story shared with them, and no storyteller should do that. Documentaries are an interesting hybrid, though: you need a good story, and if it’s about science, a good bit will be on the concepts. How to make that exciting and not spin out into yawn-provoking pedantry is quite the trick.

Malcolm MacIver, PhD, is a professor at Northwestern University with joint appointments in the Biomedical and Mechanical Engineering departments, and an adjunct appointment in the Department of Neurobiology and Physiology. He received a B.Sc. and MA at the University of Toronto, and a PhD in Neuroscience at the University of Illinois at Urbana-Champaign in 2001.

~*ScriptPhD*~
